The Keep Argument

Best for: fine tuning how .duplicated treats the origin row for any duplicates it finds

Let's say we want to flag the origin row and any subsequent rows as duplicates. To do this, we turn to the keep argument and call .duplicated() with keep set to the boolean False. This tells .duplicated we don't want to "keep" any duplicates. Now, any two or more rows that share the same data will all be marked True in the output.

Comparing the original DataFrame with the resulting boolean Series, the first and second rows that were marked False in our last example are now marked True, since their data matches the duplicate rows in the fourth and fifth positions.

The default value for keep is "first". This tells the .duplicated method to mark the first occurrence of any duplicated row as False and every subsequent occurrence as True. Since "first" is the default keep argument, we don't need to include it when calling .duplicated(), but it's still important to understand how it works.

Printing the result confirms the method performed as expected: the fourth and fifth rows are marked as duplicates, which matches the result when we executed .duplicated with no keep argument specified in the original example.

If you want to keep the last instance of a duplicate row, you can invert the default behavior of .duplicated by changing the argument to "last". Instead of marking the fourth and fifth rows, this marks the initial occurrences of the duplicate data as True in the first and second rows.

The Subset Argument

Best for: narrowing the scope of what .duplicated inspects to suspect columns to produce more focused results

In some scenarios, it makes sense to limit our search for duplicates to specific columns instead of the entire DataFrame. To achieve this, we use the subset argument.
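The keep and subset arguments described above can be tried side by side. The sketch below is only an illustration: the DataFrame is a hypothetical stand-in for kitch_prod_df, and its column names (item_name, price) and values are assumptions. Only the layout of the duplicates, with "fork" and "spoon" repeated in the fourth and fifth rows, follows the description.

```python
import pandas as pd

# Hypothetical stand-in for kitch_prod_df. Column names and values are
# assumptions for illustration; only the duplicate layout follows the text.
kitch_prod_df = pd.DataFrame(
    {
        "item_name": ["fork", "spoon", "knife", "fork", "spoon", "knife"],
        "price": [1.50, 1.25, 2.00, 1.50, 1.25, 2.50],
    }
)

# keep="first" (the default): later repeats are flagged, the first is not.
first_mask = kitch_prod_df.duplicated(keep="first")
print(first_mask.tolist())  # [False, False, False, True, True, False]

# keep="last": earlier repeats are flagged, the last occurrence is not.
last_mask = kitch_prod_df.duplicated(keep="last")
print(last_mask.tolist())   # [True, True, False, False, False, False]

# keep=False: every row that has a match anywhere is flagged.
all_mask = kitch_prod_df.duplicated(keep=False)
print(all_mask.tolist())    # [True, True, False, True, True, False]

# subset: compare rows on the listed columns only. The two "knife" rows
# differ in price, so they only count as duplicates when price is ignored.
name_mask = kitch_prod_df.duplicated(subset=["item_name"])
print(name_mask.tolist())   # [False, False, False, True, True, True]
```

Note that subset can widen the set of flagged rows: rows that differ in an ignored column are treated as matches.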

To find out where the duplicates are, we'll turn to the aptly named .duplicated method. Then we can decide how best to deal with them.

Our example data is a product inventory of kitchen cutlery and utensils, stored in a DataFrame named kitch_prod_df. This will be our base DataFrame over the following tutorials.

With the .duplicated method, we can identify which rows are duplicates. Calling .duplicated() on our DataFrame kitch_prod_df returns booleans organized into a pandas Series (also known as a column), which we can inspect by printing to the terminal.

The easiest way to interpret these results is to think of .duplicated as asking the question "Is this row duplicated?" The method looks through each row to determine if all the data present completely matches the values of any other row in the DataFrame. If it finds an exact match to another row, it returns True. In other words, it answers the question "Is this row duplicated?" with "Yes." This means that any rows that are marked False are unique. To return to the printout, this means we have four unique rows and two duplicate rows ("fork" and "spoon" at index positions 3 and 4).

The default behavior of .duplicated is to mark the second instance of a row as the duplicate (and any other instances that follow it). It considers the first row to contain the matching data as unique. However, you can modify this behavior by changing the arguments.

Let's review the arguments available for the .duplicated method and how you can adjust them to fit your needs.
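As a rough sketch of the steps above, the example below builds a small stand-in for kitch_prod_df, calls .duplicated() on it, and counts the flagged rows. The column names (item_name, price) and the values are assumptions made for illustration, since the original inventory is not reproduced here. Only the layout of the duplicates, with "fork" and "spoon" repeated at index positions 3 and 4, follows the description.

```python
import pandas as pd

# Hypothetical stand-in for kitch_prod_df. Column names and values are
# assumptions for illustration; only the duplicate layout follows the text.
kitch_prod_df = pd.DataFrame(
    {
        "item_name": ["fork", "spoon", "knife", "fork", "spoon", "knife"],
        "price": [1.50, 1.25, 2.00, 1.50, 1.25, 2.50],
    }
)

# Boolean Series: True where a row exactly repeats an earlier row.
dup_mask = kitch_prod_df.duplicated()
print(dup_mask.tolist())  # [False, False, False, True, True, False]

# True counts as 1 when summed, so the sum is the number of duplicate rows.
n_duplicates = int(dup_mask.sum())
n_unique = len(kitch_prod_df) - n_duplicates
print(n_duplicates, n_unique)  # 2 4

# Boolean indexing pulls out the rows that were flagged.
duplicate_rows = kitch_prod_df[dup_mask]
print(duplicate_rows)
```

Summing the boolean Series is a quick way to get a duplicate count without scanning the printout by eye.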

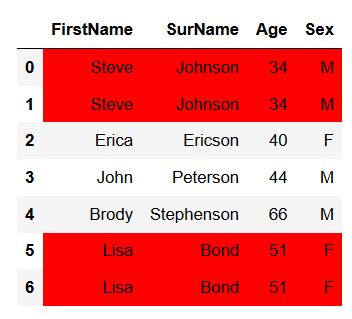

At worst, duplicate data can skew analysis results and threaten the integrity of the data set.

Pandas is an open-source Python library that optimizes storage and manipulation of structured data. The framework also has built-in support for data cleansing operations, including removing duplicate rows and columns. This post covers the .duplicated and .drop_duplicates methods of the pandas library in three parts: How to Count the Number of Duplicated Rows in Pandas DataFrames, How to Drop Duplicate Rows in Pandas DataFrames, and How to Drop Duplicate Columns in Pandas DataFrames.

How to Count the Number of Duplicated Rows in Pandas DataFrames

Best for: inspecting your data sets for duplicates without having to manually comb through rows and columns of data

Before we start removing duplicate rows, it's wise to get a better idea of where the duplicates are in our data set.

Removing duplicate values from your data set plays an important role in the cleansing process. At a minimum, duplicate data takes up unnecessary storage space and slows down calculations.
